How to set up an EKS cluster running MongoDB

Follow the AWS instructions to build a basic three-node Kubernetes cluster in EKS. The process is straightforward: https://docs.aws.amazon.com/eks/latest/userguide/getting-started.html

You should now have a three-node Kubernetes cluster based on the default EKS configuration.

$ kubectl get nodes

NAME STATUS ROLES AGE VERSION

ip-192-168-113-226.us-west-2.compute.internal Ready <none> 9m v1.10.3

ip-192-168-181-241.us-west-2.compute.internal Ready <none> 9m v1.10.3

ip-192-168-201-131.us-west-2.compute.internal Ready <none> 9m v1.10.3

Installing Portworx in EKS

Installing Portworx on Amazon EKS is not very different from installing it on a Kubernetes cluster set up through kops. The Portworx EKS documentation lists the steps for running a Portworx cluster in a Kubernetes environment deployed on AWS.

The Portworx cluster must be up and running on EKS before you proceed to the next step. The Portworx pods in the kube-system namespace should be in the Running state.

$ kubectl get pods -n=kube-system -l name=portworx

NAME READY STATUS RESTARTS AGE

portworx-42kg4 1/1 Running 0 1d

portworx-5c6pp 1/1 Running 0 1d

portworx-dqfhz 1/1 Running 0 1d
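For scripted setups, the check above can be automated. The following is a minimal sketch, not taken from the Portworx documentation: count_ready_pods is a hypothetical helper that reads the table printed by kubectl get pods and counts the pods that are Running with every container ready.

```shell
# Hypothetical helper: count pods that are Running with all containers ready.
# Reads the default table output of `kubectl get pods` on stdin.
count_ready_pods() {
  awk '$3 == "Running" {
         split($2, ready, "/")            # READY column looks like "1/1"
         if (ready[1] == ready[2]) n++
       }
       END { print n + 0 }'
}

# Usage against a live cluster (assumes kubectl points at the EKS cluster):
#   kubectl get pods -n kube-system -l name=portworx | count_ready_pods
```

On the three-node cluster used here, the helper should print 3 once Portworx is healthy on every node.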

Creating a storage class for MongoDB

Once the EKS cluster is up and running, and Portworx is installed and configured, we will deploy a highly available MongoDB database.

Through storage class objects, an admin can define different classes of Portworx volumes that are offered in a cluster. These classes will be used during the dynamic provisioning of volumes. The storage class defines the replication factor, I/O profile (e.g., for a database or a CMS), and priority (e.g., SSD or HDD). These parameters impact the availability and throughput of workloads and can be specified for each volume. This is important because a production database will have different requirements than a development Jenkins cluster.

In this example, the storage class that we deploy has a replication factor of 3, with the I/O profile set to “db_remote” and the priority set to “high.” This means that the storage will be optimized for low-latency database workloads like MongoDB and automatically placed on the highest-performance storage available in the cluster. Notice that the storage class also specifies the filesystem, xfs.

$ cat > px-mongo-sc.yaml << EOF
kind: StorageClass
apiVersion: storage.k8s.io/v1beta1
metadata:
  name: px-ha-sc
provisioner: kubernetes.io/portworx-volume
parameters:
  repl: "3"
  io_profile: "db_remote"
  priority_io: "high"
  fs: "xfs"
EOF

Create the storage class and verify that it is available in the cluster. Storage classes are cluster-scoped, so they do not belong to a namespace.

$ kubectl create -f px-mongo-sc.yaml

storageclass.storage.k8s.io "px-ha-sc" created

$ kubectl get sc

NAME PROVISIONER AGE

px-ha-sc kubernetes.io/portworx-volume 10s

stork-snapshot-sc stork-snapshot 3d
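Because each workload can get its own class, it can help to generate the manifest from a few arguments. The sketch below is illustrative only: render_px_sc and the class name px-dev-sc are hypothetical, and the parameter names and values are the ones already used in px-mongo-sc.yaml, with the I/O profile and filesystem kept fixed.

```shell
# Hypothetical helper: print a Portworx StorageClass manifest.
# Arguments: class name, replication factor, I/O priority (high, medium, low).
render_px_sc() {
  cat << EOF
kind: StorageClass
apiVersion: storage.k8s.io/v1beta1
metadata:
  name: $1
provisioner: kubernetes.io/portworx-volume
parameters:
  repl: "$2"
  io_profile: "db_remote"
  priority_io: "$3"
  fs: "xfs"
EOF
}

# Example: a lower-priority class for a development database.
render_px_sc px-dev-sc 2 low
# To create it in the cluster: render_px_sc px-dev-sc 2 low | kubectl create -f -
```

A production database could keep px-ha-sc while development workloads use a class with fewer replicas and lower priority.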

Creating a MongoDB PVC on Kubernetes

We can now create a Persistent Volume Claim (PVC) based on the storage class. Thanks to dynamic provisioning, the claim is satisfied without our having to provision a persistent volume (PV) explicitly.

$ cat > px-mongo-pvc.yaml << EOF
kind: PersistentVolumeClaim
apiVersion: v1
metadata:
  name: px-mongo-pvc
  annotations:
    volume.beta.kubernetes.io/storage-class: px-ha-sc
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 1Gi
EOF

$ kubectl create -f px-mongo-pvc.yaml

persistentvolumeclaim "px-mongo-pvc" created

$ kubectl get pvc

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE

px-mongo-pvc Bound pvc-e0acf1df-9231-11e8-864b-0abd3d2e35a4 1Gi RWO px-ha-sc 19s
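In a script, we would want to wait until the claim reports Bound before deploying MongoDB. The following is a minimal sketch under the assumption that kubectl is configured for the cluster; pvc_phase and wait_for_bound are hypothetical helper names, and the retry count and delay are arbitrary defaults.

```shell
# Hypothetical helper: print the phase of a PVC (Pending, Bound, or Lost).
pvc_phase() {
  kubectl get pvc "$1" -o jsonpath='{.status.phase}'
}

# Poll until the claim is Bound, or give up after a number of attempts.
# Arguments: claim name, max attempts (default 30), seconds between attempts (default 2).
wait_for_bound() {
  name=$1
  attempts=${2:-30}
  delay=${3:-2}
  i=0
  while [ "$i" -lt "$attempts" ]; do
    [ "$(pvc_phase "$name")" = "Bound" ] && return 0
    i=$((i + 1))
    sleep "$delay"
  done
  echo "PVC $name not Bound after $attempts attempts" >&2
  return 1
}

# Usage: wait_for_bound px-mongo-pvc
```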

Deploying MongoDB on EKS

Finally, let’s create a MongoDB instance as a Kubernetes deployment object. For simplicity’s sake, we will just be deploying a single Mongo pod. Because Portworx provides synchronous replication for High Availability, a single MongoDB instance might be the best deployment option for your MongoDB database. Portworx can also provide backing volumes for multi-node MongoDB replica sets. The choice is yours.

$ cat > px-mongo-app.yaml << EOF
apiVersion: apps/v1
kind: Deployment
metadata:
  name: mongo
spec:
  strategy:
    rollingUpdate:
      maxSurge: 1
      maxUnavailable: 1
    type: RollingUpdate
  replicas: 1
  selector:
    matchLabels:
      app: mongo
  template:
    metadata:
      labels:
        app: mongo
    spec:
      schedulerName: stork
      containers:
      - name: mongo
        image: mongo
        imagePullPolicy: "Always"
        ports:
        - containerPort: 27017
        volumeMounts:
        - mountPath: /data/db
          name: mongodb
      volumes:
      - name: mongodb
        persistentVolumeClaim:
          claimName: px-mongo-pvc
EOF

$ kubectl create -f px-mongo-app.yaml

deployment.apps "mongo" created

The MongoDB deployment defined above is explicitly associated with the PVC px-mongo-pvc, which we created in the previous step.

This deployment creates a single pod running MongoDB backed by Portworx.

$ kubectl get pods

NAME READY STATUS RESTARTS AGE

mongo-68cc69bc95-mxqsb 1/1 Running 0 54s

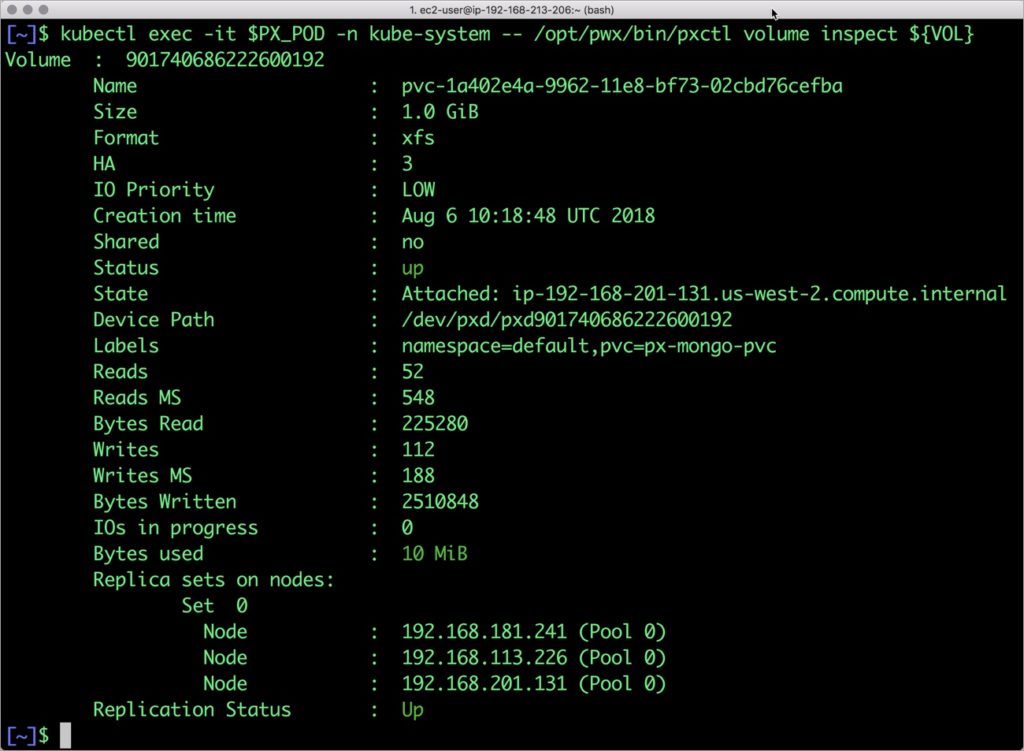

We can inspect the Portworx volume with the pxctl tool, which runs inside the Portworx pod.

$ VOL=`kubectl get pvc | grep px-mongo-pvc | awk '{print $3}'`

$ PX_POD=$(kubectl get pods -l name=portworx -n kube-system -o jsonpath='{.items[0].metadata.name}')

$ kubectl exec -it $PX_POD -n kube-system -- /opt/pwx/bin/pxctl volume inspect ${VOL}

Volume : 607995846497198316

Name : pvc-64b57bdd-9254-11e8-8c5e-0253036635a0

Size : 1.0 GiB

Format : xfs

HA : 3

IO Priority : LOW

Creation time : Jul 28 10:53:01 UTC 2018

Shared : no

Status : up

State : Attached: ip-192-168-95-234.us-west-2.compute.internal

Device Path : /dev/pxd/pxd607995846497198316

Labels : namespace=default,pvc=px-mongo-pvc

Reads : 52

Reads MS : 20

Bytes Read : 225280

Writes : 106

Writes MS : 236

Bytes Written : 2453504

IOs in progress : 0

Bytes used : 10 MiB

Replica sets on nodes:

Set 0

Node : 192.168.95.234 (Pool 0)

Node : 192.168.203.81 (Pool 0)

Node : 192.168.185.157 (Pool 0)

Replication Status : Up

The output of the above command confirms that the volume backing the MongoDB instance was created with three replicas.
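To check individual fields without reading the whole report, the output can be filtered. This is a minimal sketch that assumes the "Key : Value" layout shown above; px_field is a hypothetical helper, not a pxctl feature.

```shell
# Hypothetical helper: print the value of one field from
# `pxctl volume inspect` output read on stdin.
px_field() {
  awk -v want="$1" '
    index($0, ":") {
      key = $0
      sub(/[[:space:]]*:.*$/, "", key)      # drop everything from the first colon
      sub(/^[[:space:]]+/, "", key)         # drop leading indentation
      val = $0
      sub(/^[^:]*:[[:space:]]*/, "", val)   # keep everything after the first colon
      if (key == want) { print val; exit }
    }'
}

# Usage (assumes PX_POD and VOL are set as in the commands above):
#   kubectl exec "$PX_POD" -n kube-system -- /opt/pwx/bin/pxctl volume inspect "$VOL" > inspect.txt
#   [ "$(px_field HA < inspect.txt)" = "3" ] && echo "replication factor OK"
```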

Failing over MongoDB pod on Kubernetes

Populating sample data

Let’s populate the database with some sample data.

We will first find the pod that’s running MongoDB to access the shell.

$ POD=`kubectl get pods -l app=mongo | grep Running | grep 1/1 | awk '{print $1}'`

$ kubectl exec -it $POD -- mongo

MongoDB shell version v4.0.0

connecting to: mongodb://127.0.0.1:27017

MongoDB server version: 4.0.0

Welcome to the MongoDB shell.

…..

Now that we are inside the shell, we can populate a collection.

db.ships.insert({name:'USS Enterprise-D',operator:'Starfleet',type:'Explorer',class:'Galaxy',crew:750,codes:[10,11,12]})

db.ships.insert({name:'USS Prometheus',operator:'Starfleet',class:'Prometheus',crew:4,codes:[1,14,17]})

db.ships.insert({name:'USS Defiant',operator:'Starfleet',class:'Defiant',crew:50,codes:[10,17,19]})

db.ships.insert({name:'IKS Buruk',operator:' Klingon Empire',class:'Warship',crew:40,codes:[100,110,120]})

db.ships.insert({name:'IKS Somraw',operator:' Klingon Empire',class:'Raptor',crew:50,codes:[101,111,120]})

db.ships.insert({name:'Scimitar',operator:'Romulan Star Empire',type:'Warbird',class:'Warbird',crew:25,codes:[201,211,220]})

db.ships.insert({name:'Narada',operator:'Romulan Star Empire',type:'Warbird',class:'Warbird',crew:65,codes:[251,251,220]})

Let’s run a few queries on the Mongo collection.

Find one arbitrary document:

db.ships.findOne()

{

"_id" : ObjectId("5b5c16221108c314d4c000cd"),

"name" : "USS Enterprise-D",

"operator" : "Starfleet",

"type" : "Explorer",

"class" : "Galaxy",

"crew" : 750,

"codes" : [

10,

11,

12

]

}

Find all documents, with pretty formatting:

db.ships.find().pretty()

…..

{

"_id" : ObjectId("5b5c16221108c314d4c000d1"),

"name" : "IKS Somraw",

"operator" : " Klingon Empire",

"class" : "Raptor",

"crew" : 50,

"codes" : [

101,

111,

120

]

}

{

"_id" : ObjectId("5b5c16221108c314d4c000d2"),

"name" : "Scimitar",

"operator" : "Romulan Star Empire",

"type" : "Warbird",

"class" : "Warbird",

"crew" : 25,

"codes" : [

201,

211,

220

]

}

…..

Show only the names of the ships:

db.ships.find({}, {name:true, _id:false})

{ "name" : "USS Enterprise-D" }

{ "name" : "USS Prometheus" }

{ "name" : "USS Defiant" }

{ "name" : "IKS Buruk" }

{ "name" : "IKS Somraw" }

{ "name" : "Scimitar" }

{ "name" : "Narada" }

Find one document by attribute:

db.ships.findOne({'name':'USS Defiant'})

{

"_id" : ObjectId("5b5c16221108c314d4c000cf"),

"name" : "USS Defiant",

"operator" : "Starfleet",

"class" : "Defiant",

"crew" : 50,

"codes" : [

10,

17,

19

]

}

Exit from the client shell to return to the host.

Simulating node failure

Now, let’s simulate a node failure by cordoning off the node on which MongoDB is running.

$ NODE=`kubectl get pods -l app=mongo -o wide | grep -v NAME | awk '{print $7}'`

$ kubectl cordon ${NODE}

node "ip-192-168-217-164.us-west-2.compute.internal" cordoned

The above command disabled scheduling on one of the nodes.

$ kubectl get nodes

NAME STATUS ROLES AGE VERSION

ip-192-168-128-254.us-west-2.compute.internal Ready <none> 3d v1.10.3

ip-192-168-217-164.us-west-2.compute.internal Ready,SchedulingDisabled <none> 3d v1.10.3

ip-192-168-94-92.us-west-2.compute.internal Ready <none> 3d v1.10.3

Now, let’s go ahead and delete the MongoDB pod.

$ POD=`kubectl get pods -l app=mongo -o wide | grep -v NAME | awk '{print $1}'`

$ kubectl delete pod ${POD}

pod "mongo-68cc69bc95-mxqsb" deleted

As soon as the pod is deleted, it is relocated to the node with the replicated data, even when that node is in a different Availability Zone. STorage ORchestrator for Kubernetes (STORK), a Portworx-contributed open source storage scheduler, ensures that the pod is rescheduled on the exact node where the data is stored.

Let’s verify this by running the command below. We will notice that a new pod has been created and scheduled on a different node.

$ kubectl get pods -l app=mongo -o wide

NAME READY STATUS RESTARTS AGE IP NODE

mongo-68cc69bc95-thqbm 1/1 Running 0 19s 192.168.82.119 ip-192-168-94-92.us-west-2.compute.internal
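The same check can be scripted by reading the node name directly instead of counting columns. This is a minimal sketch: pod_node and moved_off_node are hypothetical helpers, and the sketch assumes the old pod has already finished terminating, so the first pod returned for the label is the replacement.

```shell
# Hypothetical helper: print the node that hosts the first pod matching a label.
pod_node() {
  kubectl get pods -l "$1" -o jsonpath='{.items[0].spec.nodeName}'
}

# Succeeds when the pod now runs on a node other than the cordoned one.
# Arguments: label selector, name of the cordoned node.
moved_off_node() {
  current=$(pod_node "$1")
  [ -n "$current" ] && [ "$current" != "$2" ]
}

# Usage, with NODE set by the cordon step above:
#   moved_off_node app=mongo "$NODE" && echo "pod rescheduled"
```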

Let’s uncordon the node to bring it back to action.

$ kubectl uncordon ${NODE}

node "ip-192-168-217-164.us-west-2.compute.internal" uncordoned

Finally, let’s verify that the data is still available.

Verifying that the data is intact

Let’s find the pod name, then use kubectl exec to open the Mongo shell.

$ POD=`kubectl get pods -l app=mongo | grep Running | grep 1/1 | awk '{print $1}'`

$ kubectl exec -it $POD -- mongo

MongoDB shell version v4.0.0

connecting to: mongodb://127.0.0.1:27017

MongoDB server version: 4.0.0

Welcome to the MongoDB shell.

…..

We will query the collection to verify that the data is intact.

Find one arbitrary document:

db.ships.findOne()

{

"_id" : ObjectId("5b5c16221108c314d4c000cd"),

"name" : "USS Enterprise-D",

"operator" : "Starfleet",

"type" : "Explorer",

"class" : "Galaxy",

"crew" : 750,

"codes" : [

10,

11,

12

]

}

Find all documents, with pretty formatting:

db.ships.find().pretty()

…..

{

"_id" : ObjectId("5b5c16221108c314d4c000d1"),

"name" : "IKS Somraw",

"operator" : " Klingon Empire",

"class" : "Raptor",

"crew" : 50,

"codes" : [

101,

111,

120

]

}

{

"_id" : ObjectId("5b5c16221108c314d4c000d2"),

"name" : "Scimitar",

"operator" : "Romulan Star Empire",

"type" : "Warbird",

"class" : "Warbird",

"crew" : 25,

"codes" : [

201,

211,

220

]

}

…..

Show only the names of the ships:

db.ships.find({}, {name:true, _id:false})

{ "name" : "USS Enterprise-D" }

{ "name" : "USS Prometheus" }

{ "name" : "USS Defiant" }

{ "name" : "IKS Buruk" }

{ "name" : "IKS Somraw" }

{ "name" : "Scimitar" }

{ "name" : "Narada" }

Find one document by attribute:

db.ships.findOne({'name':'Narada'})

{

"_id" : ObjectId("5b5c16221108c314d4c000d3"),

"name" : "Narada",

"operator" : "Romulan Star Empire",

"type" : "Warbird",

"class" : "Warbird",

"crew" : 65,

"codes" : [

251,

251,

220

]

}

Observe that the collection is still there and all the content is intact! Exit from the client shell to return to the host.
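For an automated failover test, the same verification can run without an interactive session. The sketch below assumes the legacy mongo shell that ships with the MongoDB 4.0 image used here, and the default test database; ships_count and verify_ships are hypothetical helper names.

```shell
# Hypothetical helper: print the number of documents in the ships collection.
# Runs the mongo shell inside the pod without opening an interactive session.
ships_count() {
  kubectl exec "$1" -- mongo --quiet --eval 'print(db.ships.count())'
}

# Compare the count against the seven documents inserted earlier.
verify_ships() {
  count=$(ships_count "$1")
  if [ "$count" = "7" ]; then
    echo "data intact: $count documents"
  else
    echo "unexpected document count: $count" >&2
    return 1
  fi
}

# Usage: verify_ships "$POD"
```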

Performing Storage Operations on MongoDB

After testing end-to-end failover of the database, let’s perform StorageOps for MongoDB on our EKS cluster.

Expanding the Kubernetes Volume with no downtime

Currently, the Portworx volume that we created at the beginning is 1 GiB in size. We will now expand it to double the storage capacity.

First, let’s get the volume name and inspect it through the pxctl tool.

If you have access, SSH into one of the nodes and run the following command.

$ POD=`/opt/pwx/bin/pxctl volume list --label pvc=px-mongo-pvc | grep -v ID | awk '{print $1}'`

$ /opt/pwx/bin/pxctl v i $POD

Volume : 607995846497198316

Name : pvc-64b57bdd-9254-11e8-8c5e-0253036635a0

Size : 1.0 GiB

Format : xfs

HA : 3

IO Priority : LOW

Creation time : Jul 28 10:53:01 UTC 2018

Shared : no

Status : up

State : Attached: ip-192-168-95-234.us-west-2.compute.internal

Device Path : /dev/pxd/pxd607995846497198316

Labels : namespace=default,pvc=px-mongo-pvc

Reads : 52

Reads MS : 20

Bytes Read : 225280

Writes : 106

Writes MS : 236

Bytes Written : 2453504

IOs in progress : 0

Bytes used : 10 MiB

Replica sets on nodes:

Set 0

Node : 192.168.95.234 (Pool 0)

Node : 192.168.203.81 (Pool 0)

Node : 192.168.185.157 (Pool 0)

Replication Status : Up

Note that the Portworx volume is currently 1 GiB. Let’s expand it to 2 GiB; as the output below shows, the --size value is interpreted in GiB.

$ /opt/pwx/bin/pxctl volume update $POD --size=2

Update Volume: Volume update successful for volume 607995846497198316

Check the new volume size.

$ /opt/pwx/bin/pxctl v i $POD

Volume : 607995846497198316

Name : pvc-64b57bdd-9254-11e8-8c5e-0253036635a0

Size : 2.0 GiB

Format : xfs

HA : 3

IO Priority : LOW

Creation time : Jul 28 10:53:01 UTC 2018

Shared : no

Status : up

State : Attached: ip-192-168-95-234.us-west-2.compute.internal

Device Path : /dev/pxd/pxd607995846497198316

Labels : namespace=default,pvc=px-mongo-pvc

Reads : 65

Reads MS : 20

Bytes Read : 278528

Writes : 218

Writes MS : 344

Bytes Written : 3149824

IOs in progress : 0

Bytes used : 11 MiB

Replica sets on nodes:

Set 0

Node : 192.168.95.234 (Pool 0)

Node : 192.168.203.81 (Pool 0)

Node : 192.168.185.157 (Pool 0)

Replication Status : Up
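It can also be useful to confirm the new size from inside the MongoDB pod, since that is what the database actually sees. This is a minimal sketch, assuming kubectl access from a workstation; data_dir_kib, fs_larger_than_kib, and the MONGO_POD variable are hypothetical names, and the 1 GiB threshold is only an example.

```shell
# Hypothetical helper: print the size in KiB of the filesystem mounted at /data/db.
# Argument: name of the MongoDB pod.
data_dir_kib() {
  kubectl exec "$1" -- df -kP /data/db | awk 'NR == 2 { print $2 }'
}

# Succeeds when the mounted filesystem is larger than the given size in KiB.
fs_larger_than_kib() {
  size=$(data_dir_kib "$1")
  [ -n "$size" ] && [ "$size" -gt "$2" ]
}

# Usage (MONGO_POD holds the MongoDB pod name; 1048576 KiB is 1 GiB):
#   fs_larger_than_kib "$MONGO_POD" 1048576 && echo "filesystem grew past 1 GiB"
```

The reported size is normally a little below the volume size because the filesystem reserves space for its own metadata.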

Taking Snapshots of a Kubernetes volume and restoring the database

Portworx supports creating snapshots for Kubernetes PVCs.

Let’s create a snapshot for the PVC we created for MongoDB.

$ cat > px-mongo-snap.yaml << EOF
apiVersion: volumesnapshot.external-storage.k8s.io/v1
kind: VolumeSnapshot
metadata:
  name: px-mongo-snapshot
  namespace: default
spec:
  persistentVolumeClaimName: px-mongo-pvc
EOF

$ kubectl create -f px-mongo-snap.yaml

volumesnapshot.volumesnapshot.external-storage.k8s.io "px-mongo-snapshot" created

Verify that the volume snapshot was created.

$ kubectl get volumesnapshot

NAME AGE

px-mongo-snapshot 1m

$ kubectl get volumesnapshotdatas

NAME AGE

k8s-volume-snapshot-9e539249-9255-11e8-b018-e2f4b6cbb690 2m

With the snapshot in place, let’s go ahead and drop the collection.

$ POD=`kubectl get pods -l app=mongo | grep Running | grep 1/1 | awk '{print $1}'`

$ kubectl exec -it $POD -- mongo

db.ships.drop()

Since snapshots are just like volumes, we can use one to start a new instance of MongoDB. Let’s create a new instance of MongoDB by restoring the snapshot data.

$ cat > px-mongo-snap-pvc.yaml << EOF
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: px-mongo-snap-clone
  annotations:
    snapshot.alpha.kubernetes.io/snapshot: px-mongo-snapshot
spec:
  accessModes:
    - ReadWriteOnce
  storageClassName: stork-snapshot-sc
  resources:
    requests:
      storage: 2Gi
EOF

$ kubectl create -f px-mongo-snap-pvc.yaml

persistentvolumeclaim "px-mongo-snap-clone" created

From the new PVC, we will create a MongoDB pod.

$ cat > px-mongo-snap-restore.yaml << EOF
apiVersion: apps/v1
kind: Deployment
metadata:
  name: mongo-snap
spec:
  strategy:
    rollingUpdate:
      maxSurge: 1
      maxUnavailable: 1
    type: RollingUpdate
  replicas: 1
  selector:
    matchLabels:
      app: mongo-snap
  template:
    metadata:
      labels:
        app: mongo-snap
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: px/running
                operator: NotIn
                values:
                - "false"
              - key: px/enabled
                operator: NotIn
                values:
                - "false"
      containers:
      - name: mongo
        image: mongo
        imagePullPolicy: "Always"
        ports:
        - containerPort: 27017
        volumeMounts:
        - mountPath: /data/db
          name: mongodb
      volumes:
      - name: mongodb
        persistentVolumeClaim:
          claimName: px-mongo-snap-clone
EOF

$ kubectl create -f px-mongo-snap-restore.yaml

deployment.apps "mongo-snap" created

Verify that the new pod is in the Running state.

$ kubectl get pods -l app=mongo-snap

NAME READY STATUS RESTARTS AGE

mongo-snap-6cd7d5b7f-gcrw2 1/1 Running 0 5m

Finally, let’s access the sample data created earlier in the walkthrough.

$ POD=`kubectl get pods -l app=mongo-snap | grep Running | grep 1/1 | awk '{print $1}'`

$ kubectl exec -it $POD -- mongo

MongoDB shell version v4.0.0

connecting to: mongodb://127.0.0.1:27017

MongoDB server version: 4.0.0

Welcome to the MongoDB shell.

…..

db.ships.find({}, {name:true, _id:false})

{ "name" : "USS Enterprise-D" }

{ "name" : "USS Prometheus" }

{ "name" : "USS Defiant" }

{ "name" : "IKS Buruk" }

{ "name" : "IKS Somraw" }

{ "name" : "Scimitar" }

{ "name" : "Narada" }

Notice that the collection is still there with the data intact. We can also push the snapshot to Amazon S3 if we want to create a disaster recovery backup in another AWS region. Portworx snapshots also work with any S3-compatible object storage, so the backup can go to a different cloud or even an on-premises data center.